This will be brief-ish, since the issues are the same as those addressed re: the Stanford tagger in my last post, and the results are worse.

I’ve again used out-of-the-box settings; like Stanford, TreeTagger uses a version of the Penn tagset. A translation table is available, as is a list of basic tags I’m using for comparison.

As with Stanford, there are a couple of reasons to expect that the results will be worse than those seen with MorphAdorner. There’s the tokenizer again (TreeTagger breaks up things that are single tokens in the reference data), and there’s the non-literary training set. Plus there’s the incompatibility between the tagsets, which is mostly as before:

- New: TreeTagger has a funky ‘IN/that’ tag, which might be translated as either ‘pp’ or ‘cs’ (where ‘cs’, subordinating conjunction, is already rolled into ‘cc’, conjunction, in my reduced tagset). I’ve used ‘pp’, which should therefore be overcounted, while ‘cc’ is undercounted.

- TreeTagger/Penn has a ‘to’ tag for the word “to”; the reference data (MorphAdorner’s training corpus) has no such thing, using ‘pc’ and ‘pp’ as appropriate instead.

- TreeTagger uses ‘pdt’ for “pre-determiner,” i.e., words that come before (and qualify) a determiner. MorphAdorner lacks this tag, using ‘dt’ or ‘jj’ as appropriate.

- Easier: TreeTagger uses ‘pos’ for possessive suffixes, while MorphAdorner doesn’t break them off from the base token, and contains modified versions of the base tags that indicate possessiveness. But because I’m not looking at possessives as an individual class, I can just ignore these; the base tokens will be tagged on their own anyway.

- Also easy-ish: TreeTagger doesn’t use MorphAdorner’s ‘xx’ (negation) tag. It turns out that almost everything MorphAdorner tags ‘xx’, TreeTagger considers an adverb, so one could lump ‘xx’ and ‘av’ together, were one so inclined.
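The translation choices above can be sketched as a small lookup plus a counting pass. This is only an illustration, not the script actually used: `PENN_TO_REDUCED`, `DROPPED`, `reduce_tagger_tags`, and `fold_negation` are hypothetical names, the table is deliberately partial, and the ‘to’ and ‘pdt’ targets are placeholder assumptions (the list above doesn’t settle which reduced tag each one gets).

```python
from collections import Counter

# Hypothetical, partial lookup from lower-cased TreeTagger/Penn tags to reduced tags.
PENN_TO_REDUCED = {
    "in/that": "pp",  # from the post: counted as a preposition, so 'cc' loses these
    "to": "pc",       # ASSUMPTION: placeholder; the reference data uses 'pc' or 'pp'
    "pdt": "dt",      # ASSUMPTION: placeholder; the reference data uses 'dt' or 'jj'
}

# Possessive suffixes are ignored; the base token is tagged on its own anyway.
DROPPED = {"pos"}


def reduce_tagger_tags(tags):
    """Count reduced tags for a sequence of tagger output tags."""
    counts = Counter()
    for tag in tags:
        key = tag.lower()
        if key in DROPPED:
            continue
        # Simplification: tags missing from the table pass through lower-cased.
        counts[PENN_TO_REDUCED.get(key, key)] += 1
    return counts


def fold_negation(ref_counts):
    """Roll the reference data's 'xx' (negation) counts into 'av' (adverb)."""
    merged = Counter(ref_counts)
    merged["av"] += merged.pop("xx", 0)
    return merged
```

Any fixed choice for ‘to’ and ‘pdt’ shifts counts between the tags they could have mapped to, which is why those tag counts are treated as suspect.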

Data

Table 1: POS frequency in reference data and TreeTagger’s output

| POS | Ref | Test | Ref % | Test % | Diff | Err % |
|:----|----:|-----:|------:|-------:|-----:|------:|
| av | 213765 | 226125 | 5.551 | 5.830 | 0.279 | 5.0 |
| cc* | 243720 | 167227 | 6.329 | 4.312 | -2.017 | -31.9 |
| dt* | 313671 | 292794 | 8.145 | 7.549 | -0.596 | -7.3 |
| fw | 4117 | 519 | 0.107 | 0.013 | -0.094 | -87.5 |
| jj* | 210224 | 262980 | 5.459 | 6.781 | 1.322 | 24.2 |
| nn | 565304 | 642627 | 14.680 | 16.570 | 1.890 | 12.9 |
| np | 91933 | 162270 | 2.387 | 4.184 | 1.797 | 75.3 |
| nu | 24440 | 17668 | 0.635 | 0.456 | -0.179 | -28.2 |
| pc* | 54518 | 91877 | 1.416 | 2.369 | 0.953 | 67.3 |
| pp* | 323212 | 371449 | 8.393 | 9.577 | 1.184 | 14.1 |
| pr | 422442 | 386668 | 10.970 | 9.970 | -1.000 | -9.1 |
| pu | 632749 | 555115 | 16.431 | 14.313 | -2.118 | -12.9 |
| sy | 318 | 100 | 0.008 | 0.003 | -0.006 | -68.8 |
| uh | 19492 | 6063 | 0.506 | 0.156 | -0.350 | -69.1 |
| vb | 666095 | 650441 | 17.297 | 16.771 | -0.526 | -3.0 |
| wh | 40162 | 44428 | 1.043 | 1.146 | 0.103 | 9.8 |
| xx | 24544 | 0 | 0.637 | 0 | -0.637 | -100 |
| zz | 167 | 13 | 0.004 | 0.000 | -0.004 | -92.3 |
| Total | 3850873 | 3878364 | | | | |

\* Tag counts for which there is reason to expect systematic errors

Legend

POS = Part of speech (see this previous post or this list for explanations)

Ref = Number of occurrences in reference data

Test = Number of occurrences in output

Ref % = Percentage of reference data tagged with this POS

Test % = Percentage of output tagged with this POS

Diff = Difference in percentage points between Ref % and Test %

Err % = Percent error in output frequency relative to reference data
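For concreteness, here is a minimal sketch of how the derived columns relate to the raw counts. The names `TagRow` and `compare` are made up for illustration. The Err % formula (Diff divided by Ref %) is inferred from the numbers in Table 1 rather than stated anywhere, so treat it as an assumption, though it does reproduce the rows I checked.

```python
from dataclasses import dataclass


@dataclass
class TagRow:
    ref_pct: float   # Ref %: share of reference tokens with this tag
    test_pct: float  # Test %: share of output tokens with this tag
    diff: float      # Diff: Test % minus Ref %, in percentage points
    err_pct: float   # Err %: Diff relative to Ref % (inferred formula)


def compare(ref_count, test_count, ref_total, test_total):
    """Compute the derived Table 1 columns for one tag."""
    ref_pct = 100.0 * ref_count / ref_total
    test_pct = 100.0 * test_count / test_total
    diff = test_pct - ref_pct
    err_pct = 100.0 * diff / ref_pct
    return TagRow(ref_pct, test_pct, diff, err_pct)


# The 'av' row, using the counts and column totals from Table 1.
row = compare(213765, 226125, 3850873, 3878364)
print(f"{row.ref_pct:.3f} {row.test_pct:.3f} {row.diff:.3f} {row.err_pct:.1f}")
# prints: 5.551 5.830 0.279 5.0
```

Because the two runs tokenize differently, the totals differ, so Err % computed on percentages is not the same as the relative change in raw counts.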

Pictures

And then the graphs (click for large versions).

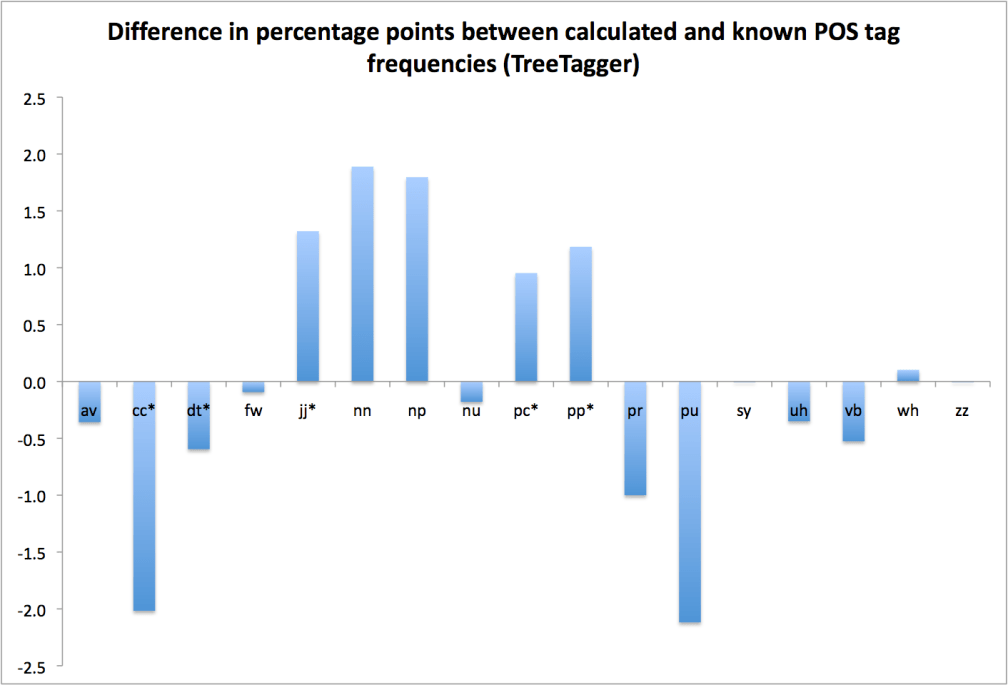

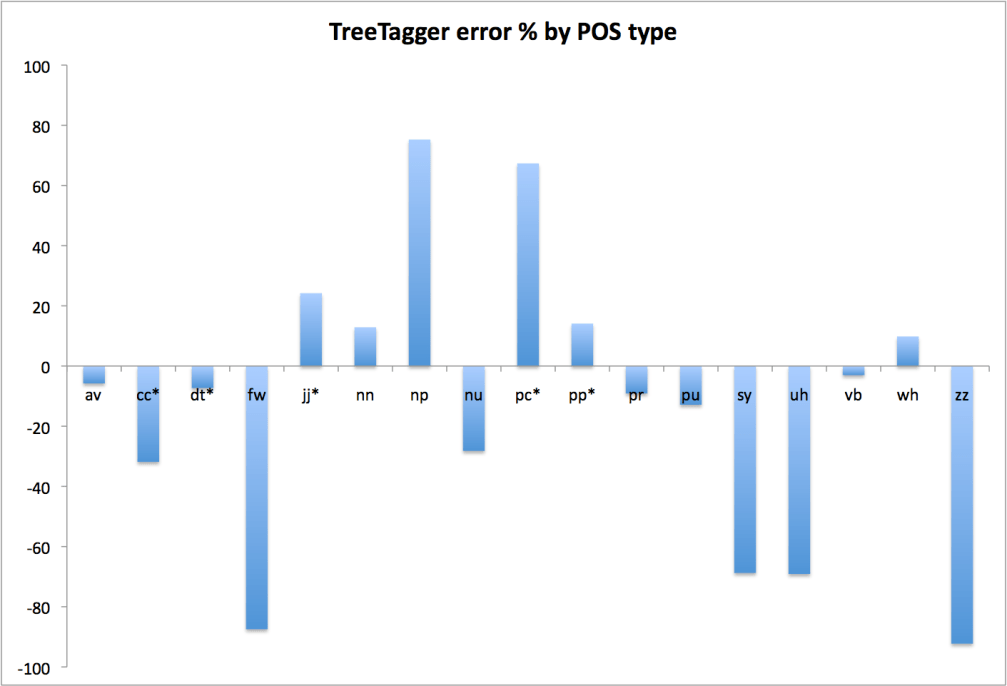

Note: These graphs are corrected for the xx/av problem discussed above; ‘xx’ tags in the reference data have been rolled into ‘av’ here.

Figure 1: Percentage point errors in POS frequency relative to reference data

Figure 2: Percent error by POS type relative to reference data

Note on Figure 2:

The bubble charts I’ve used in previous posts are a pain to create; this is much easier and, while not quite as useful for comparing weightings, is good enough for now, especially since the weightings don’t change between taggers (they’re based on tag frequency in the reference data). Note, too, that there’s no log scale involved this time.

Discussion

Ignoring the problematic tags (cc, dt, jj, pc, and pp), things are still pretty bad. Nouns, common and proper alike, are significantly overcounted; verbs and pronouns are undercounted. Rarer tokens (foreign words, symbols, interjections) are a mess, but that’s to be expected. Overall, the error rates are in the neighborhood of Stanford’s, but a bit worse.

The same caveats about the limits of translating between tagsets apply here as in the Stanford case, but again, it’s hard to see how any of this could be construed as better than MorphAdorner.

Takeaway point: TreeTagger looks to be out, too.
